GCP: Functions - Connectivity, Security, Visibility with Aviatrix


Description

A customer recently asked me about extending his existing Aviatrix environment from Azure to GCP.

This came with a small caveat.

In GCP he is using Functions.

One of those functions needs to:

- reach a backend in Azure

- be accessed from the Internet

- be accessed by customers landing over Site2Cloud connections on Spokes

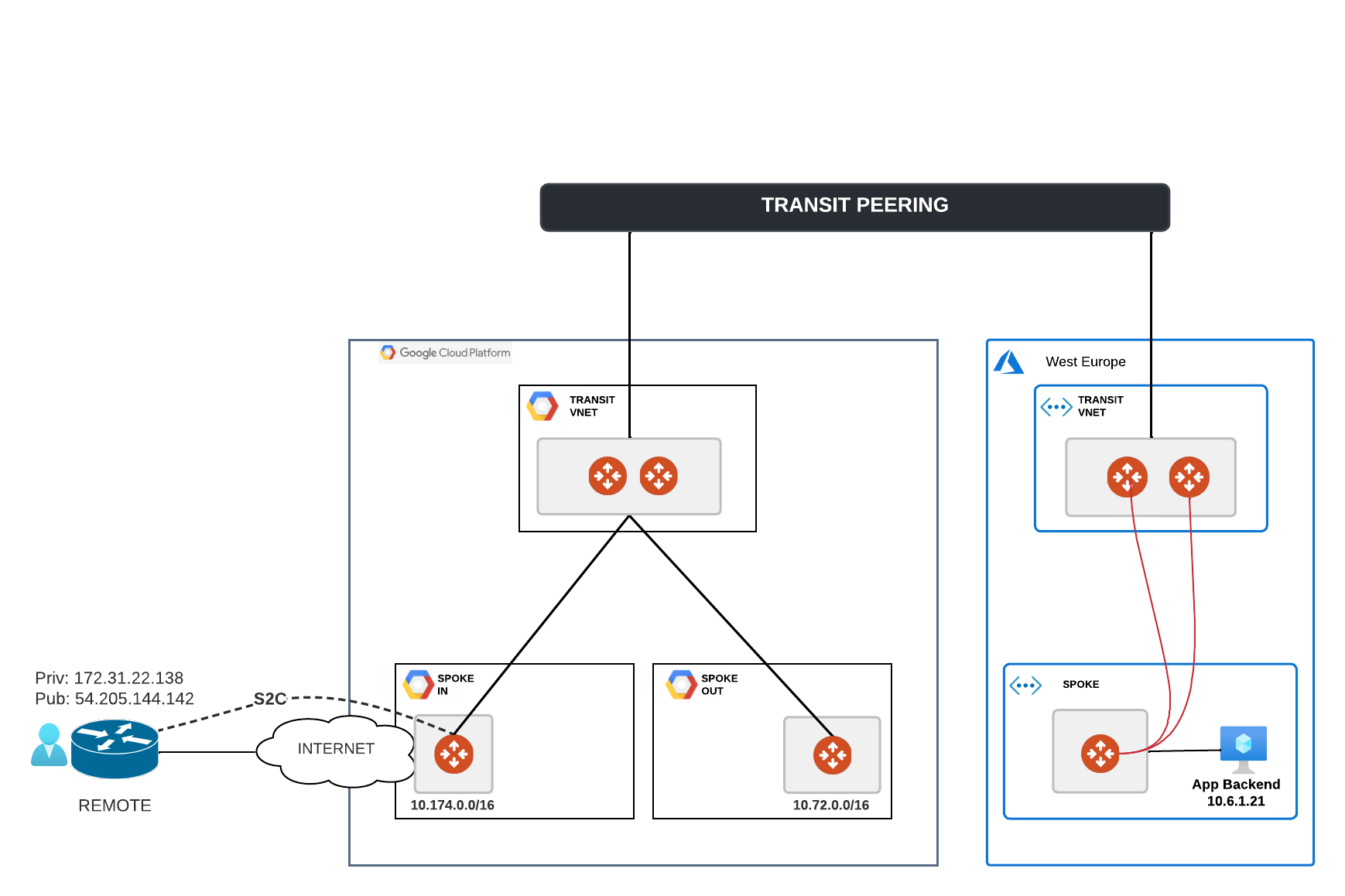

My initial lab setup for this scenario looked similar to the diagram above.

Can you see the challenge here?

Wherever I deployed a GCP function, by default it would live outside the VPC.

I would NOT be able to control where traffic flows.

I would NOT be able to easily apply various layers of security to it.

I would have NO straightforward and consistent way to monitor what happens in real time and take action when I need to troubleshoot and fix its functionality.

I would be walking blind in the dark and getting annoyed with the whole process.

For any challenge there is a solution :)

That’s the reason I chose to be a techie.

Overview

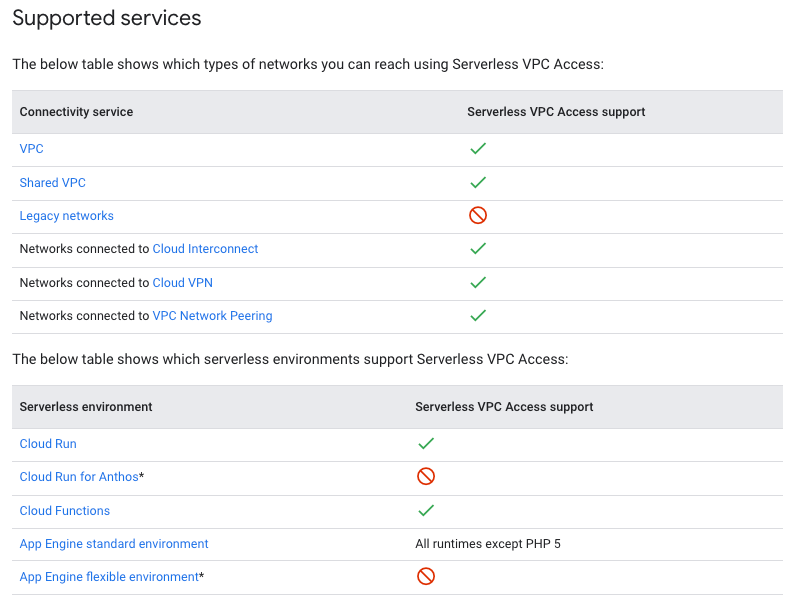

While reading up on Google Cloud Functions and the serverless concept, I stumbled upon the Serverless VPC Access documentation.

It describes very well (sometimes too extensively for my rather impatient nature) how to configure serverless services such as Cloud Run and Cloud Functions to reach resources living inside or outside Google Cloud, such as:

- VPCs

- Networks behind Cloud Interconnect (OnPrem)

- peered VPCs

- Cloud VPNs

By now you might be wondering the same thing I wondered after reading the official docs.

This only seems to cover Egress access from the GCP function, via the VPC route table.

It works well for reaching the function's backend hosted in Azure.

But what about Ingress, i.e. accessing the function from a remote client over S2C?

Here is another GCP feature that I stumbled upon: Private Service Connect (PSC).

This one deploys an Endpoint (/32 IP address) directly inside a VPC.

You can read more about it here: PSC

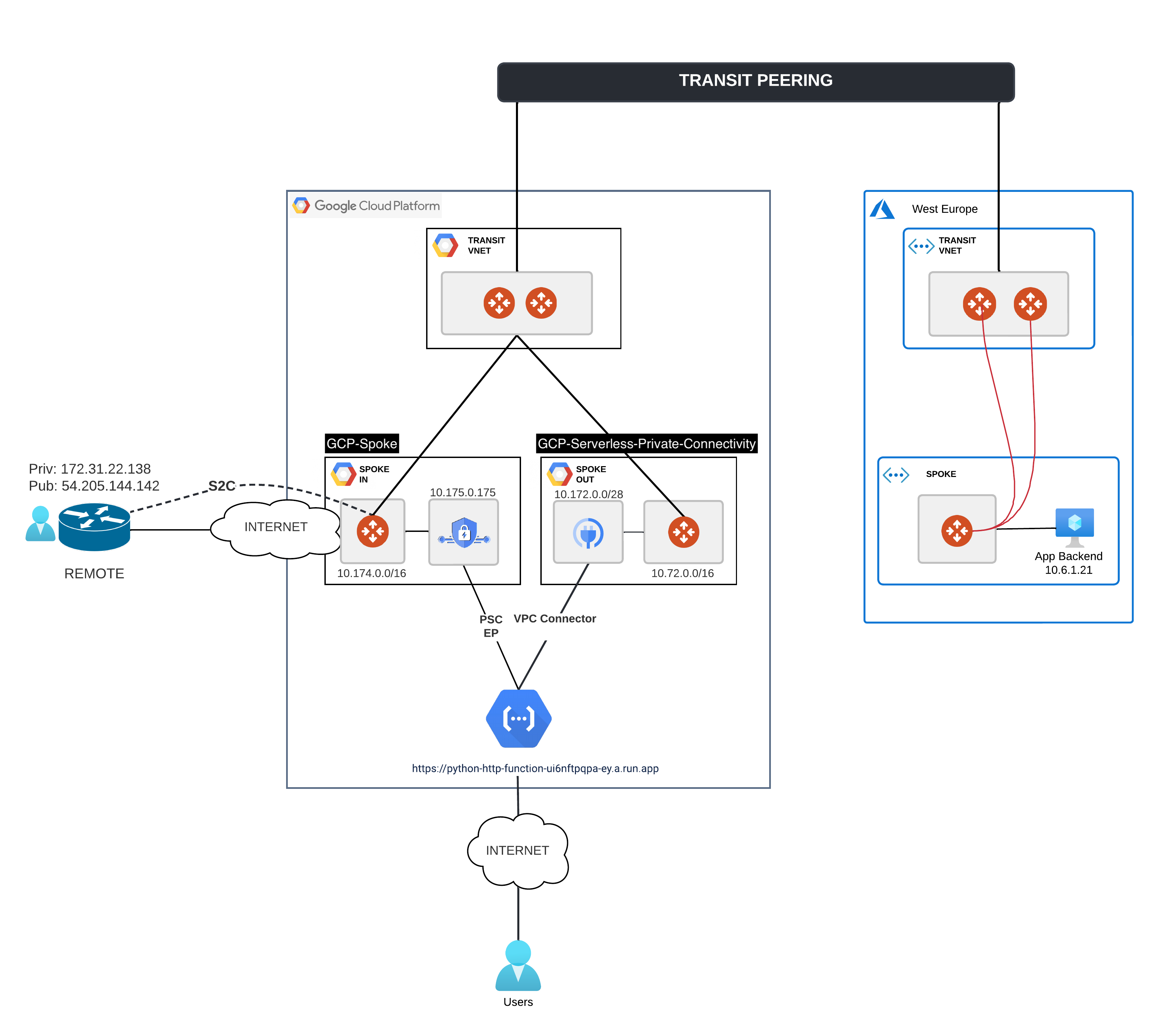

If we glue these two pieces together, the Ingress and the Egress, the design I am aiming for is this one:

And the traffic flow E2E would be:

How to set up a GCP Serverless Function

Google has quite cool code samples for this purpose in their GitHub repo.

This made my whole work a lot easier.

I hate starting from scratch and hunting around for the proper syntax and semantics.

```shell
git clone https://github.com/GoogleCloudPlatform/python-docs-samples.git
cd python-docs-samples/functions/helloworld
vim main.py
```

In main.py I changed the code of the hello_get() function and added a simple request to my backend VM (10.6.1.21 - VMQA), behind Aviatrix Spokes, inside Azure:

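```python
import functions_framework
import requests  # "requests" must also be listed in requirements.txt next to main.py
from markupsafe import escape


@functions_framework.http
def hello_get(request):
    """
    VM Azure - QA1 - 10.6.1.21
    """
    URL = 'http://10.6.1.21/NOTHING/index.html'

    # Private IP in Azure: only reachable through the Serverless VPC Access connector
    r = requests.get(url=URL, timeout=10)

    # r.text (not r.content) so the reply is not rendered as a b'...' bytes literal
    return 'I got this {}'.format(escape(r.text))
```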

I already had the gcloud CLI set up, and to push this function to GCP I used:

```shell
gcloud functions deploy python-http-function \
  --gen2 \
  --runtime=python310 \
  --region=europe-west3 \
  --source=. \
  --entry-point=hello_get \
  --trigger-http \
  --allow-unauthenticated \
  --vpc-connector=projects/prjbetaaviatrixgcp/locations/europe-west3/connectors/serverless-connector
```

Don’t worry about the --vpc-connector part at the end.

We will configure the connector in the Egress part.

That is also where you get its name/path from.

For now, you can deploy the function without it, then edit the function in the GCP web UI and map the Egress connector later.

After pushing the function, you can see the DNS name it runs under in the output of the gcloud functions deploy command:

uri: https://python-http-function-ui6nftpqpa-ey.a.run.app

For a --gen2 function, this will always end in .run.app.

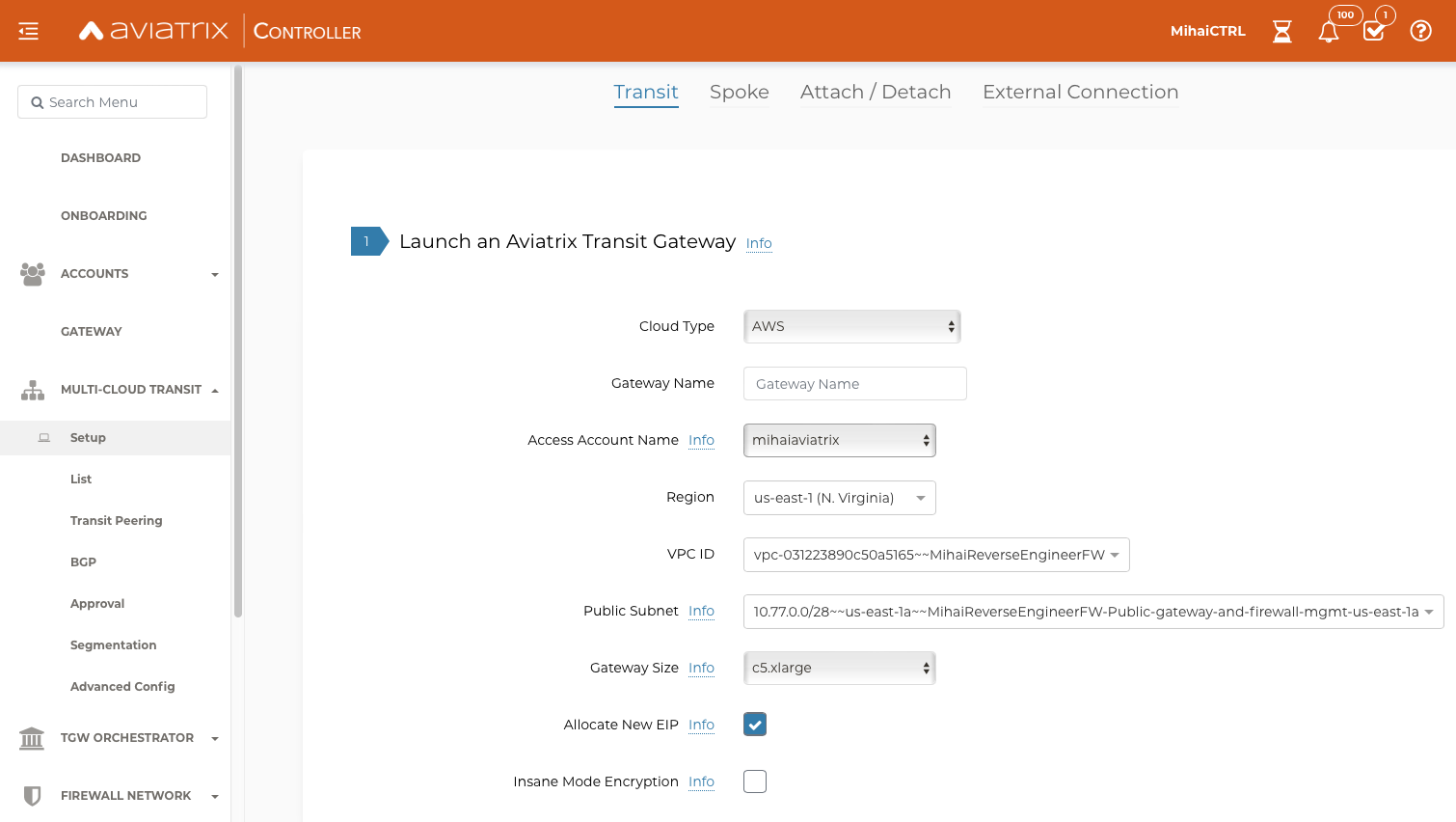

Aviatrix Secure MultiCloud

I had already deployed an Aviatrix Multicloud Infrastructure between Azure and GCP, as I wanted a single, consistent, cloud-agnostic environment with no CSP-specific limitations standing in my way.

This can be done quite easily via the UI:

or via Terraform in just a few lines by using these modules:

You can find a sample code for building an AWS-Azure-GCP MultiCloud Architecture here:

https://github.com/mihaime/3-clouds-spoke-transit-vms

Thanks to my colleague Frey for helping me set it up.

Frey’s Rep

Easy to build infra, secure & consistent across clouds

GCP Function

GCP Ingress

For access from my remote client (connected over S2C + BGP to a landing Spoke) to the GCP Function, I require:

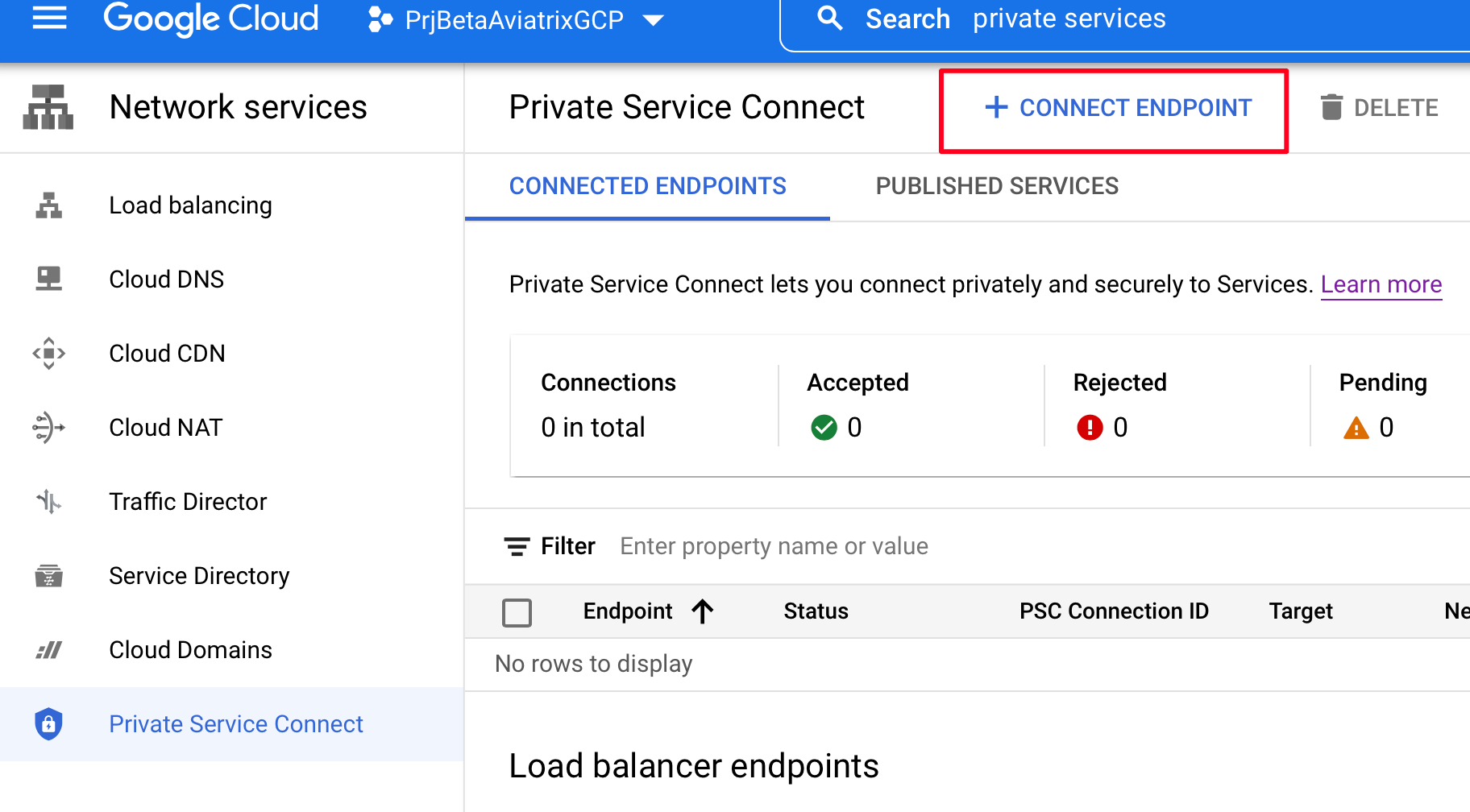

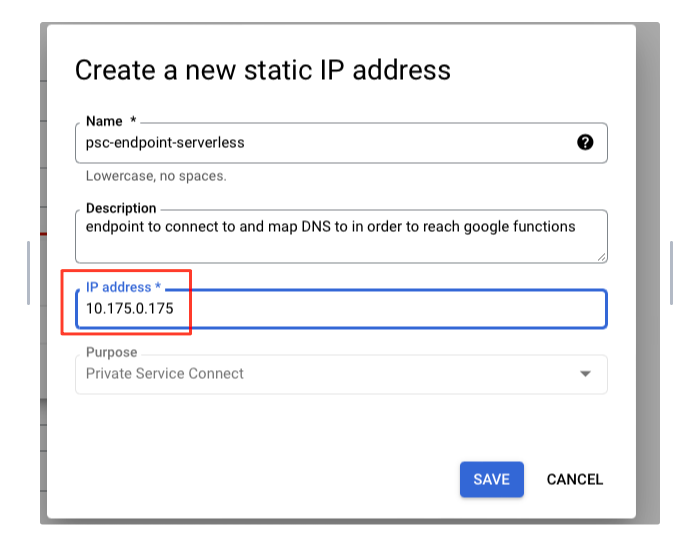

- a /32 (10.175.0.175) separate from the subnets already existing in the VPC. This is a strict requirement.

- to advertise reachability to it inside the Aviatrix Network and via S2C (Custom Spoke Advertised VPC CIDRs)

- a DNS mapping (static or dynamic) on the remote client: Function URL -> 10.175.0.175
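Because the /32 must stay outside the subnets that already exist in the VPC, it can help to check the chosen address before creating the endpoint. Here is a minimal sketch using Python's standard ipaddress module. The subnet list is hypothetical, so replace it with your own VPC ranges:

```python
import ipaddress
from typing import Iterable, List


def overlapping_subnets(endpoint_ip: str, vpc_subnets: Iterable[str]) -> List[str]:
    """Return the VPC subnets that already contain the proposed PSC endpoint IP."""
    ip = ipaddress.ip_address(endpoint_ip)
    return [cidr for cidr in vpc_subnets if ip in ipaddress.ip_network(cidr)]


# Hypothetical subnets of the Spoke VPC (not taken from the lab)
EXISTING_SUBNETS = ["10.175.1.0/24"]

if __name__ == "__main__":
    clashes = overlapping_subnets("10.175.0.175", EXISTING_SUBNETS)
    print("Endpoint IP is free" if not clashes else f"Overlaps with: {clashes}")
```

An empty result means the address does not fall into any of the listed subnets.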

Private Service Connect - Endpoint /32

A picture is worth a thousand words, so I'll let the images speak for me.

Enable Private Google Access for the Subnet:

Create the /32 Endpoint:

Custom Spoke Adv VPC CIDRs

Advertise 10.175.0.175 both inside the Aviatrix Network as well as via BGP to the S2C Remote Host:

DNS

On the Remote Host, configure a static DNS entry in /etc/hosts for testing purposes:

```
vim /etc/hosts

# add the DNS name you took from the function deployment via gcloud CLI
10.175.0.175 python-http-function-ui6nftpqpa-ey.a.run.app
```
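You can also derive the /etc/hosts line from the uri printed by gcloud, which avoids typos in the long hostname. Here is a minimal standard-library sketch. resolves_to() is a hypothetical helper that you would run on the Remote Host to confirm the static entry is actually picked up by the resolver:

```python
import ipaddress
import socket
from urllib.parse import urlparse


def hosts_entry(endpoint_ip: str, function_uri: str) -> str:
    """Build an /etc/hosts line mapping the function's hostname to the PSC endpoint."""
    ipaddress.ip_address(endpoint_ip)  # raises ValueError on an invalid IP
    host = urlparse(function_uri).hostname
    if not host or not host.endswith(".run.app"):
        raise ValueError(f"unexpected function URI: {function_uri!r}")
    return f"{endpoint_ip} {host}"


def resolves_to(hostname: str, expected_ip: str) -> bool:
    """True if the local resolver (which consults /etc/hosts) returns the PSC endpoint."""
    return socket.gethostbyname(hostname) == expected_ip


if __name__ == "__main__":
    uri = "https://python-http-function-ui6nftpqpa-ey.a.run.app"
    print(hosts_entry("10.175.0.175", uri))
```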

Assigning a Private Endpoint ensures that my function can be accessed from anywhere inside my Aviatrix Environment:

- other clouds

- other regions

- OnPremise locations

- Roaming Customers / Site2Cloud partners

and all this is done in an easy, straightforward and unified way.

No limitations, no exceptions, no headaches in configuration.

GCP Egress

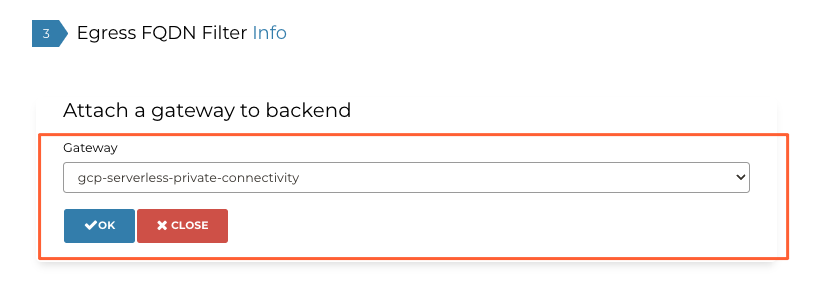

For the Function you just built to be able to access the Backend (VM QA1 - 10.6.1.21), you need to configure a Serverless VPC Access connector.

The VPC connector uses a subnet (mandatory /28) that needs to be separate from the existing one in your Aviatrix VPC (in my case named “gcp-serverless-private-connectivity”, with a Spoke GW in it).

There is no Endpoint in there that you can access directly. IPs from this subnet are ONLY used for function-initiated traffic, i.e. Egress toward a backend.
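The connector subnet has two constraints from the text above: it must be a /28, and it must not overlap the subnets already in the VPC. Below is a small pre-check sketch. The candidate range and the existing subnet are hypothetical values, not values from my lab. The extra check against the PSC endpoint /32 is an assumption that follows from the Ingress requirement:

```python
import ipaddress
from typing import Iterable, List


def connector_subnet_problems(candidate: str, existing: Iterable[str], psc_endpoint: str) -> List[str]:
    """List reasons why `candidate` cannot be used as the Serverless VPC Connector subnet."""
    net = ipaddress.ip_network(candidate)  # strict: host bits must be zero
    problems = []
    if net.prefixlen != 28:
        problems.append(f"{candidate} is a /{net.prefixlen}, the connector needs a /28")
    for cidr in existing:
        if net.overlaps(ipaddress.ip_network(cidr)):
            problems.append(f"{candidate} overlaps existing subnet {cidr}")
    if ipaddress.ip_address(psc_endpoint) in net:
        problems.append(f"{candidate} contains the PSC endpoint {psc_endpoint}")
    return problems


if __name__ == "__main__":
    # Hypothetical values: existing Spoke subnet and candidate connector range
    print(connector_subnet_problems("10.175.2.0/28", ["10.175.1.0/24"], "10.175.0.175") or "OK")
```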

What do you gain by having this Connector for Egress?

First of all, traffic no longer goes directly over the Internet, unsupervised.

Second, as the traffic traverses the Aviatrix Secure MultiCloud network, we can:

- visualize it

- filter it (FQDN Filters)

- segment it

  - network-wise (think VRFs)

  - microsegmentation-wise (think Application Domains, classifiers based on labels, line-rate performance and a distributed policy model enforced at the first hop in the path)

You have built the connectors for both Ingress and Egress.

You have connectivity up and running and everything flows as you wanted it to.

Time to add security in the mix…

Securing everything

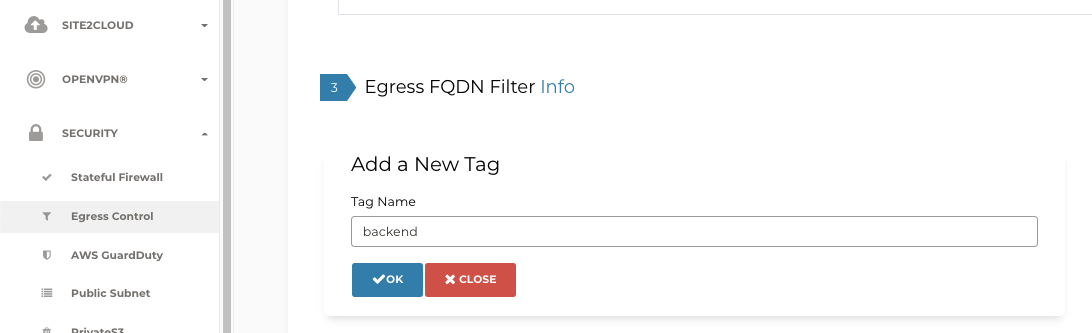

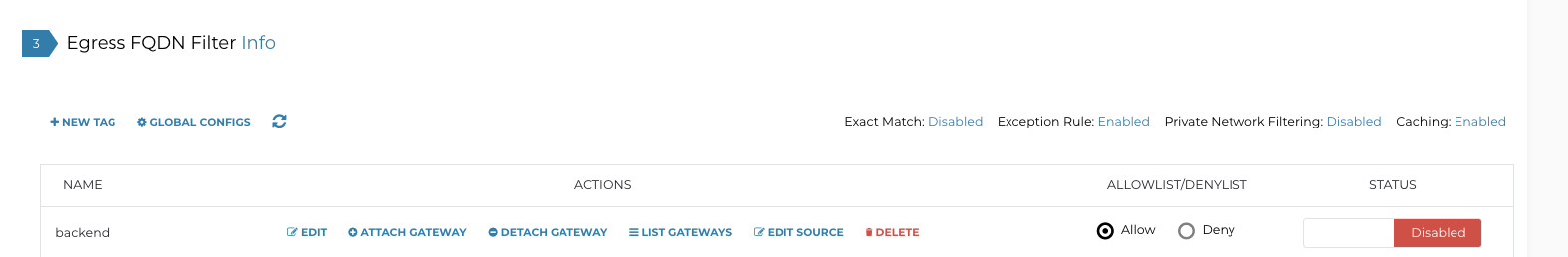

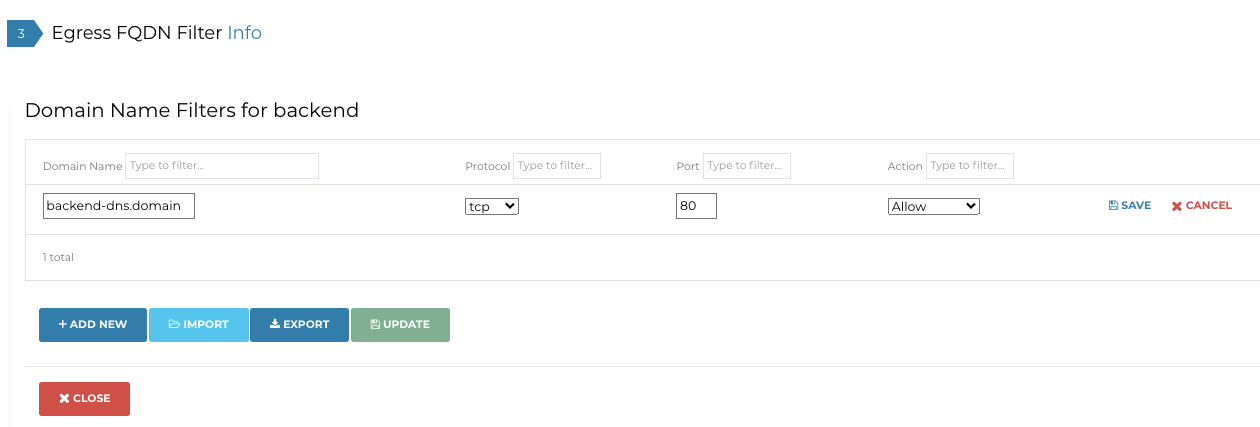

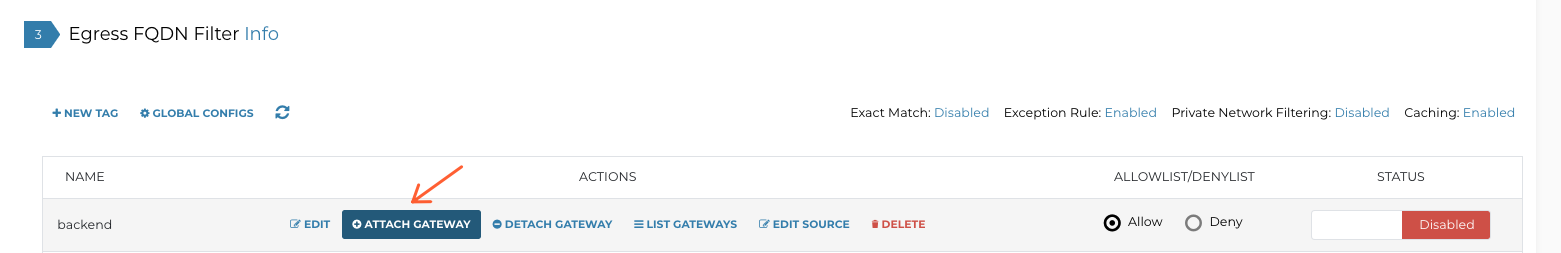

FQDN Filters

This is by far the easiest thing to configure.

The logical steps are:

- create a Tag

- map your backend domain/domains to it (in my case I use an IP for a backend but in a real world scenario you’d have a DNS name)

- add rules based on them

- attach this Tag to one or more GWs

If you also want to filter based on Source IP, not just Destination Domain, click Edit Source.

Then add the /28 Serverless VPC Connector subnet, which is where the GCP Function's traffic toward the Backend originates.
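To make the tag logic above concrete, here is a simplified Python model of a whitelist-style FQDN tag with an optional source filter. It is only a sketch of the idea, not the Aviatrix implementation or API. The class names, the default port and the 10.175.2.0/28 connector subnet are hypothetical:

```python
import ipaddress
from dataclasses import dataclass, field
from fnmatch import fnmatchcase
from typing import List


@dataclass
class FqdnRule:
    """One destination rule of a tag; domain may use wildcards such as *.example.com."""
    domain: str
    protocol: str = "tcp"
    port: int = 443


@dataclass
class FqdnTag:
    """A tag groups rules; source_cidrs mimics 'Edit Source' (empty means any source)."""
    name: str
    rules: List[FqdnRule]
    source_cidrs: List[str] = field(default_factory=list)

    def allows(self, src_ip: str, dst: str, protocol: str, port: int) -> bool:
        """Whitelist-style check: only flows matching a rule (and the source list) pass."""
        src = ipaddress.ip_address(src_ip)
        if self.source_cidrs and not any(src in ipaddress.ip_network(c) for c in self.source_cidrs):
            return False
        return any(
            fnmatchcase(dst.lower(), rule.domain.lower())
            and rule.protocol == protocol
            and rule.port == port
            for rule in self.rules
        )
```

For example, `FqdnTag("gcp-function-backend", [FqdnRule("10.6.1.21", "tcp", 80)], ["10.175.2.0/28"])` permits the function's Egress traffic to the backend and, in this model, nothing else.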

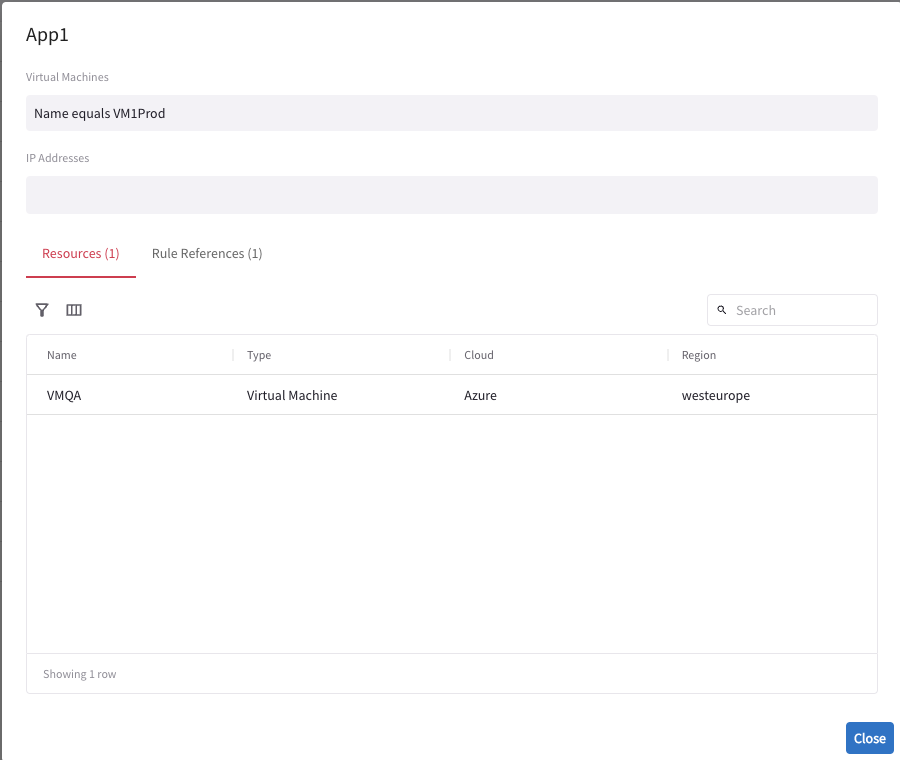

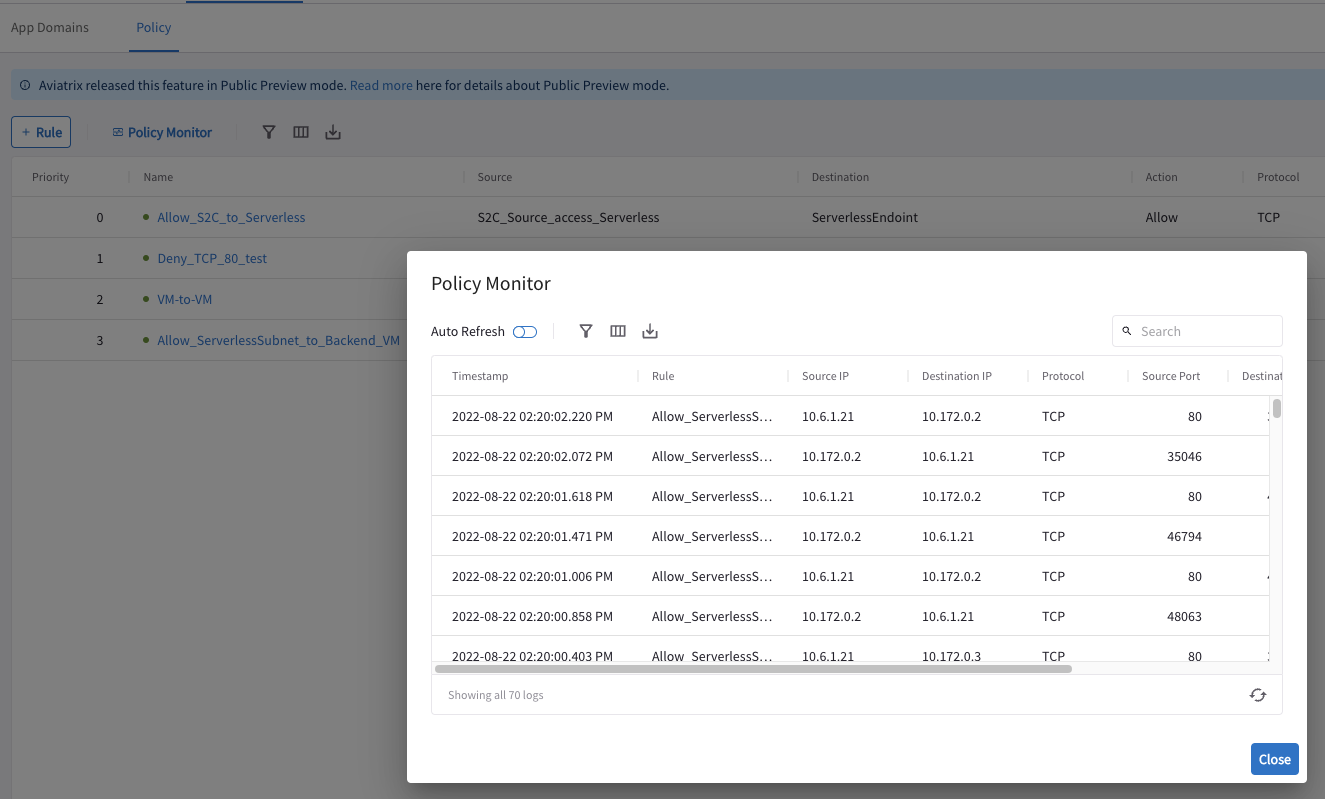

Microsegmentation

At the time of writing, this only works for AWS and Azure, but there is a hidden way to activate it as a beta for GCP as well.

Let me add a very short description of what this is:

- distributed Policy Model across your Multicloud Network (at the Ingress/Egress GW of the traffic)

- you define classifiers (App Domains) based on:

  - VM labels

  - CIDRs

  - Subnets

  - or a mix & match

- you define a Policy: Allow/Drop + TCP/UDP/ICMP

- you choose whether to have statistics and logging for it

- you can run it in Monitor mode just to see what would happen

Everything gets deployed in the background using eBPF, is line-rate and does NOT incur any performance penalty.

Flexibility is a key factor.

You define a policy ONCE and that policy reads Cloud Attributes from Workloads and automatically updates itself to match your deployment.

Adding a new VM backend, for example?

No need to change the policy: just tag the instance the same way as the existing Backends and you're DONE.

Create an Application Domain (a grouping of resources):

Here it automatically adds any VM with the Tag VM1Prod.

This is the Backend of the GCP Function.

Create another Application Domain based on CIDR (the Egress Subnet used by the GCP Function):

Create a Policy to ALLOW the communication between them
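You can model the App Domain and Policy steps roughly as follows. This is a conceptual Python sketch, not the Aviatrix microsegmentation engine. Labels are simplified to plain strings, first-match-wins and the default DROP are assumptions of the sketch, and the 10.175.2.0/28 Egress subnet is hypothetical:

```python
import ipaddress
from dataclasses import dataclass, field
from typing import List, Set


@dataclass
class Workload:
    name: str
    ip: str
    labels: Set[str] = field(default_factory=set)


@dataclass
class AppDomain:
    """Groups workloads by label (all listed labels must be present) and/or by CIDR."""
    name: str
    labels: Set[str] = field(default_factory=set)
    cidrs: List[str] = field(default_factory=list)

    def contains(self, w: Workload) -> bool:
        by_label = bool(self.labels) and self.labels <= w.labels
        by_cidr = any(ipaddress.ip_address(w.ip) in ipaddress.ip_network(c) for c in self.cidrs)
        return by_label or by_cidr


@dataclass
class Policy:
    src: AppDomain
    dst: AppDomain
    protocol: str  # "tcp", "udp" or "icmp"
    action: str    # "ALLOW" or "DROP"


def evaluate(policies: List[Policy], src: Workload, dst: Workload, protocol: str) -> str:
    """First matching policy wins; unmatched traffic is dropped (assumption of this sketch)."""
    for p in policies:
        if p.protocol == protocol and p.src.contains(src) and p.dst.contains(dst):
            return p.action
    return "DROP"


if __name__ == "__main__":
    backend = AppDomain("function-backend", labels={"VM1Prod"})
    egress = AppDomain("function-egress", cidrs=["10.175.2.0/28"])
    policies = [Policy(egress, backend, "tcp", "ALLOW")]
    fn = Workload("gcp-function", "10.175.2.5")
    print(evaluate(policies, fn, Workload("qa1", "10.6.1.21", {"VM1Prod"}), "tcp"))
```

The backend domain matches on the VM1Prod label, so a newly added VM carrying that label is covered by the same ALLOW policy without editing it.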

Do you need to see which traffic is matching?

Go to Policy Monitor

Application Discovery & Recognition in Microsegmentation…stay tuned :)

Monitoring & Visibility

The Multicloud Infrastructure is deployed.

The GCP Function can be reached both Ingress and Egress.

Security is in place.

The days move on slowly and everyone is happy.

What do you do?

CoPilot is your eyes and ears into your MultiCloud environment.

This is the first place to go when issues arise.

A few of the things I used the most while doing operations:

- topology

- packet captures at each hop

- flow visibility

- routing information across the network

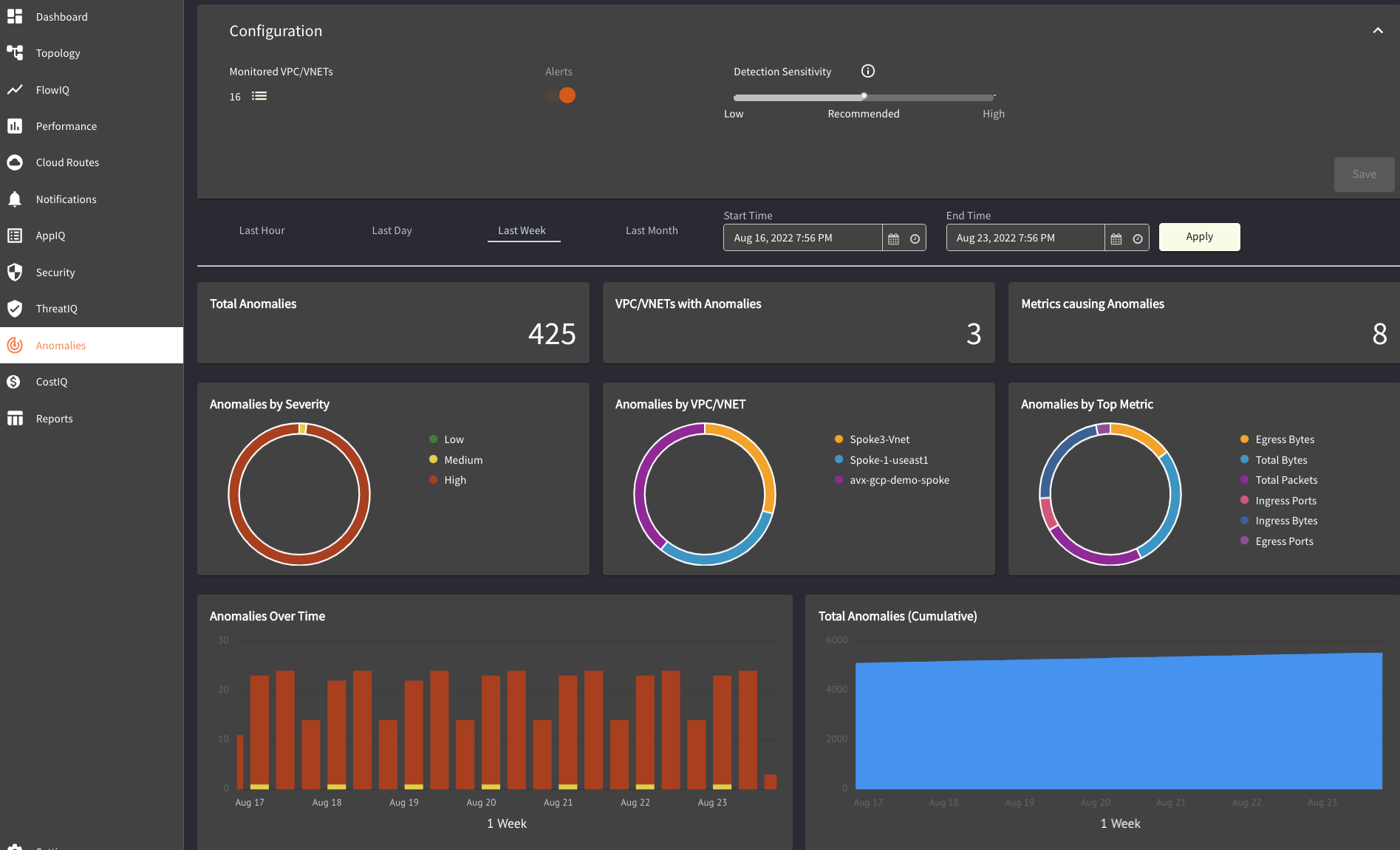

- anomaly detection

- AppIQ, to check whether everything is working control-plane-wise along the path

- Traffic Graphs and alerting based on deviations and thresholds

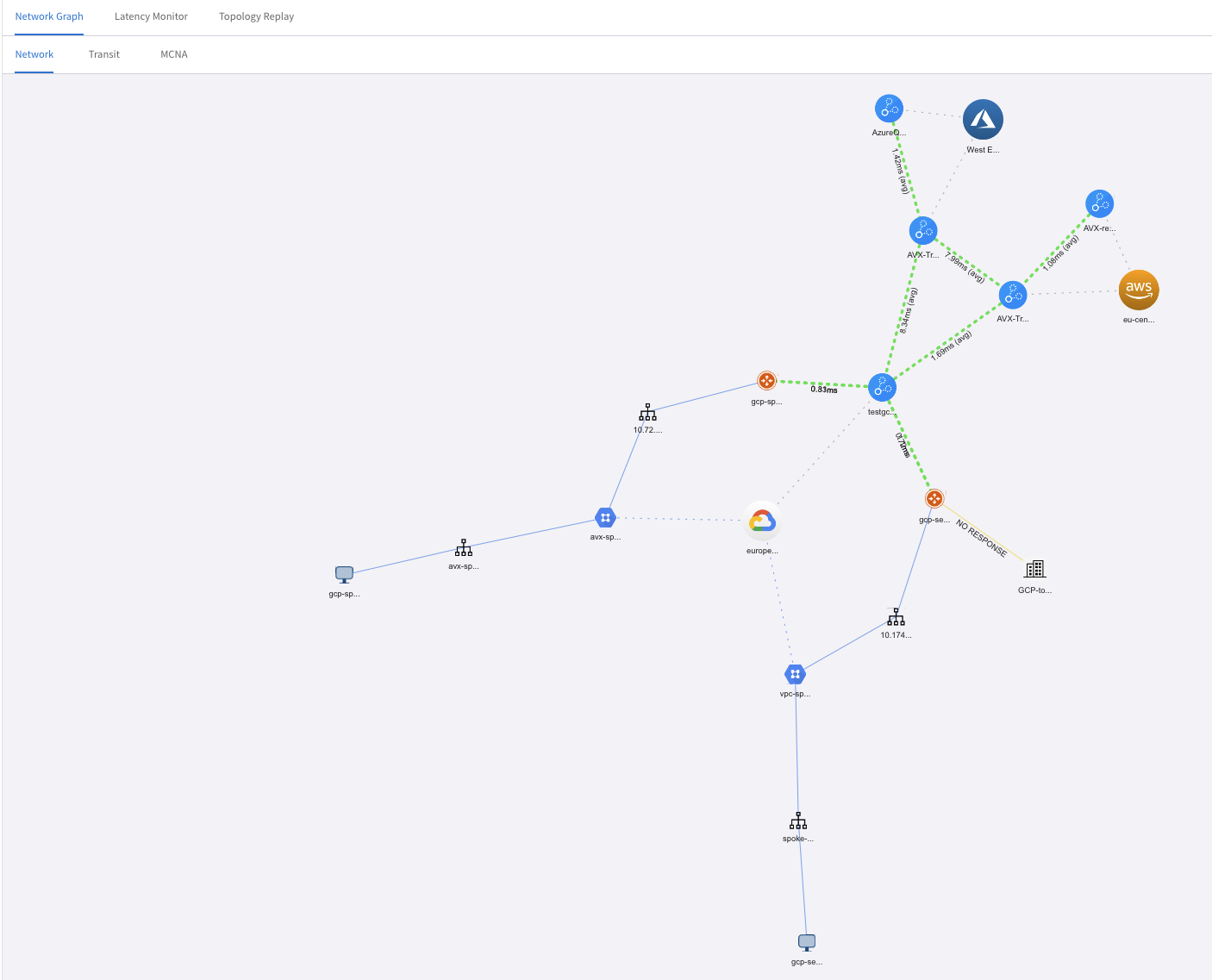

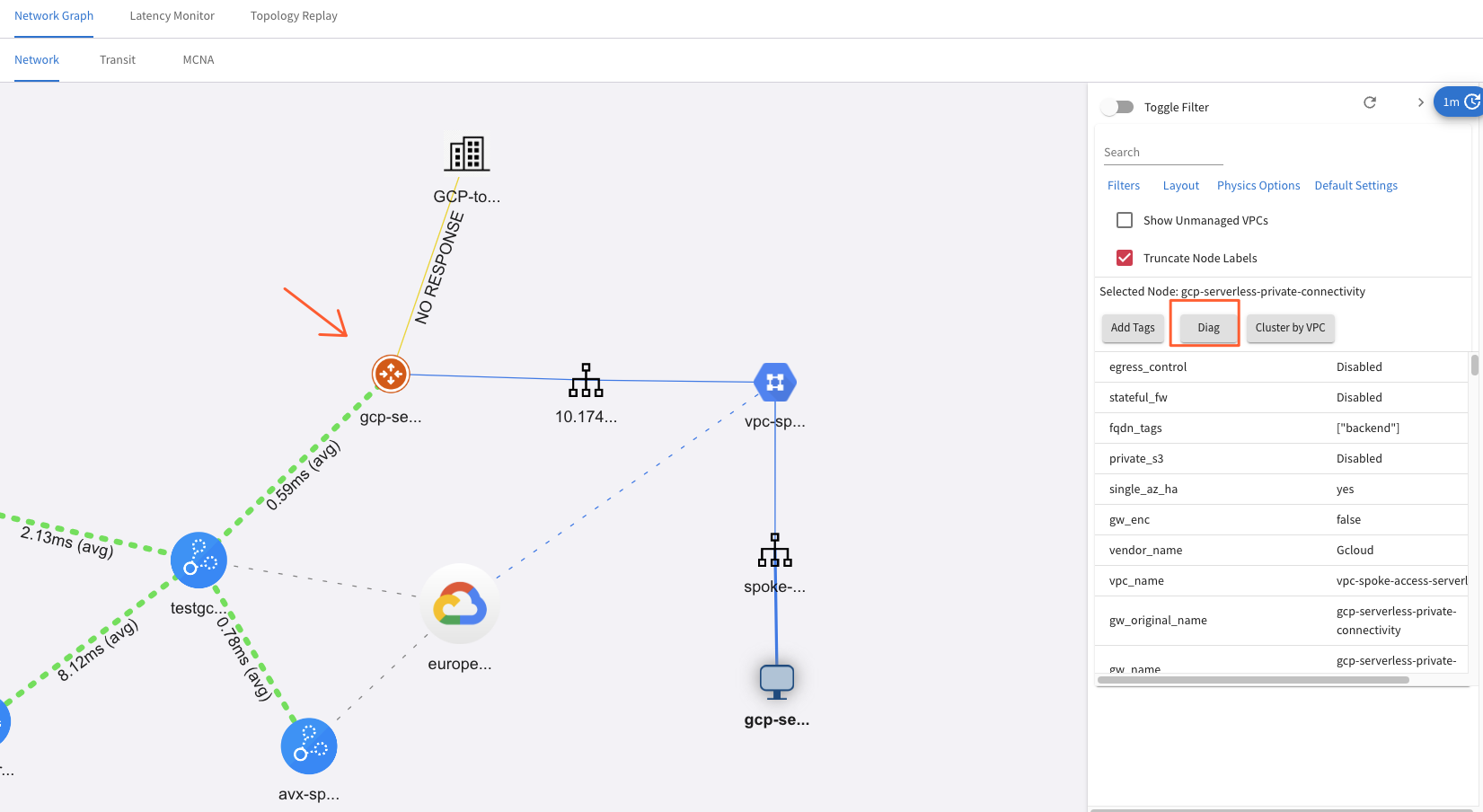

Topology

How does my network look? Where is everything deployed? How? Did anyone change anything?

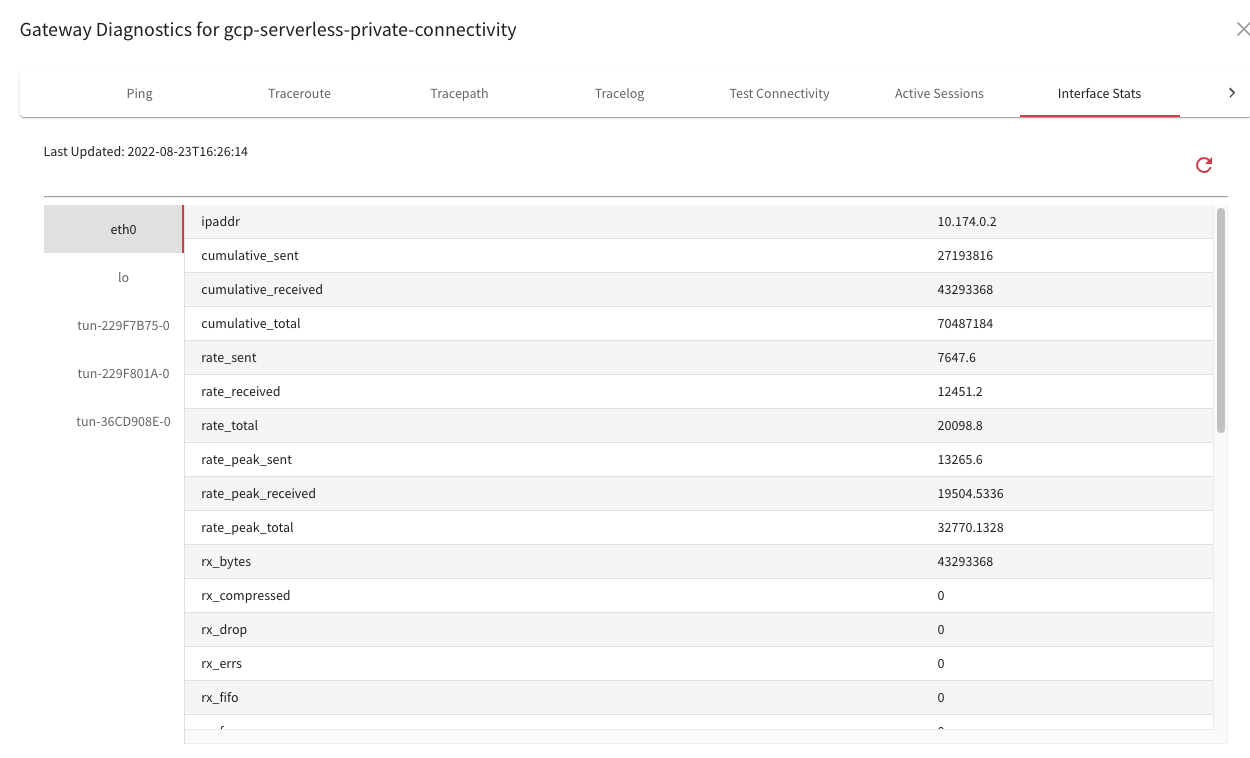

From here I can select a Gateway and go into Diag to dig deeper.

Then a whole new world for troubleshooting opens up:

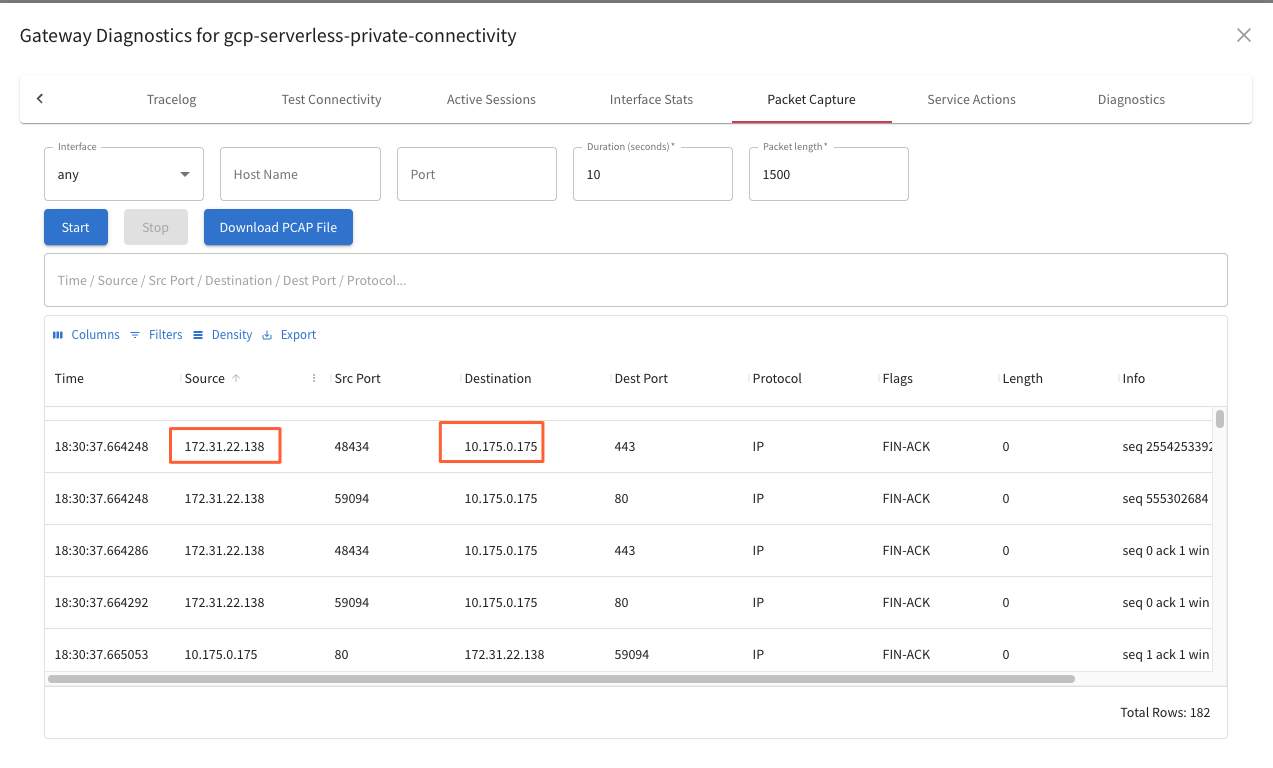

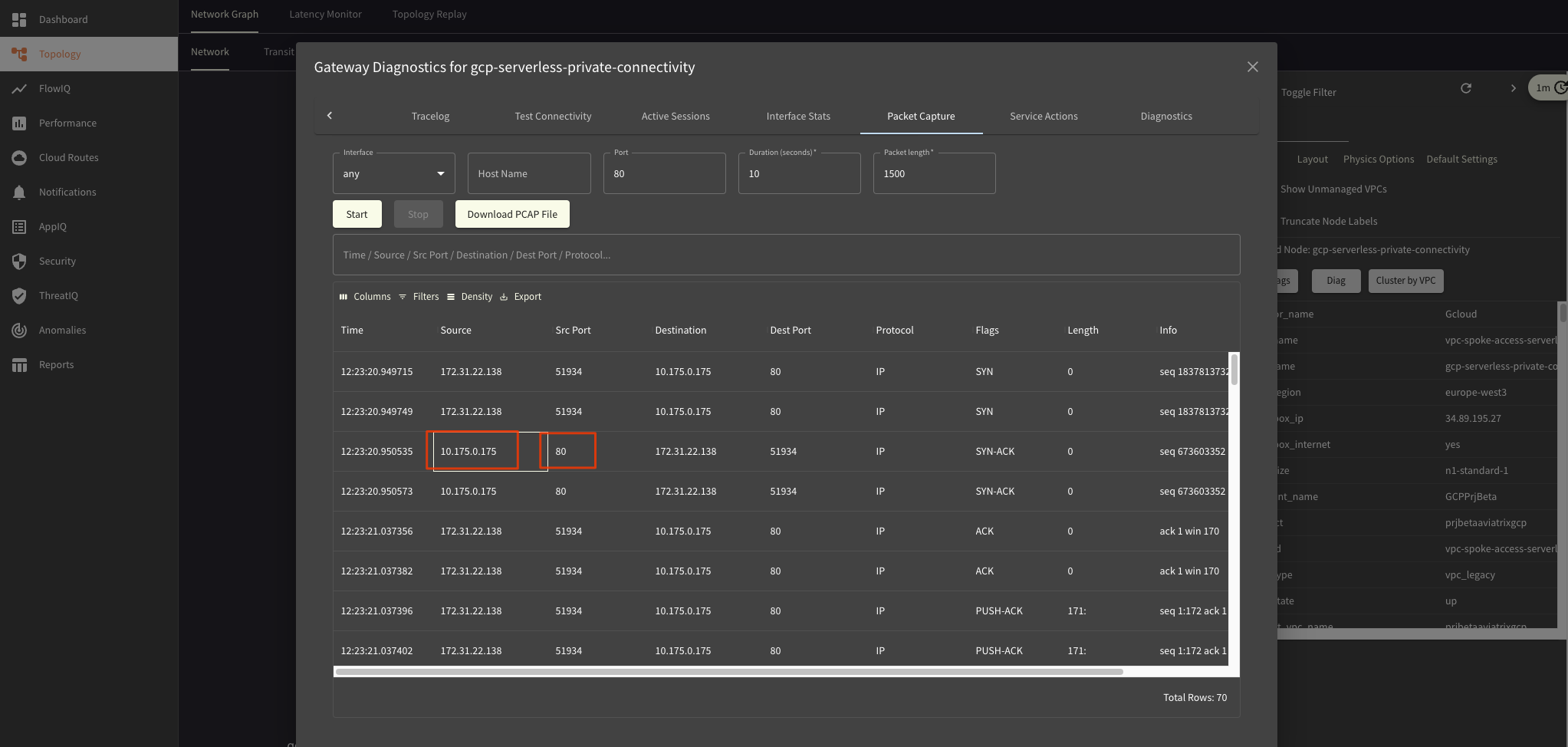

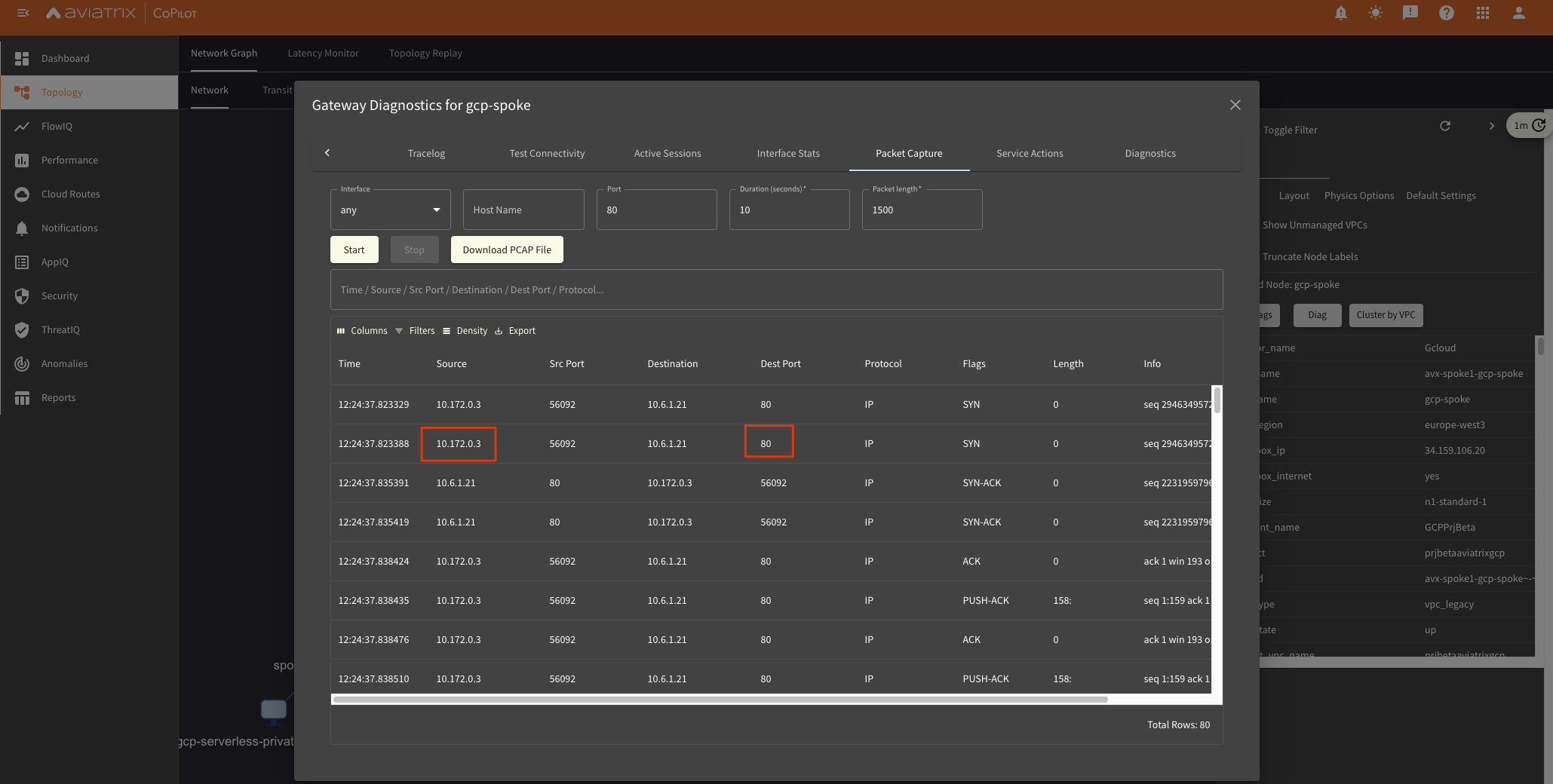

Packet Captures

We can run a Packet Capture at each hop in the Network:

We can also export it as a PCAP to see exactly what happens in Wireshark, analyze headers and compare working and non-working traffic.
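Before opening two exports side by side in Wireshark, a quick packet and byte count can already show whether the non-working capture is missing traffic. Here is a minimal standard-library sketch. It assumes the export is a classic libpcap file, and pcapng is not handled:

```python
import io
import struct
import sys
from typing import BinaryIO, Tuple

# Classic libpcap magic numbers: microsecond and nanosecond timestamp variants
PCAP_MAGICS = {0xA1B2C3D4, 0xA1B23C4D}


def pcap_summary(f: BinaryIO) -> Tuple[int, int]:
    """Return (packet_count, total_original_bytes) for a classic libpcap stream."""
    header = f.read(24)  # the global header is 24 bytes
    if len(header) < 24:
        raise ValueError("too short for a pcap global header")
    for endian in ("<", ">"):  # detect the byte order from the magic number
        if struct.unpack(endian + "I", header[:4])[0] in PCAP_MAGICS:
            break
    else:
        raise ValueError("not a classic libpcap file (pcapng is not handled)")
    packets = total = 0
    while True:
        record = f.read(16)  # per-packet header: ts_sec, ts_frac, incl_len, orig_len
        if len(record) < 16:
            break
        _, _, incl_len, orig_len = struct.unpack(endian + "IIII", record)
        f.seek(incl_len, io.SEEK_CUR)  # skip the captured packet bytes
        packets += 1
        total += orig_len
    return packets, total


def pcap_summary_bytes(data: bytes) -> Tuple[int, int]:
    return pcap_summary(io.BytesIO(data))


if __name__ == "__main__":
    for path in sys.argv[1:]:  # e.g. working.pcap broken.pcap
        with open(path, "rb") as fh:
            print(path, pcap_summary(fh))
```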

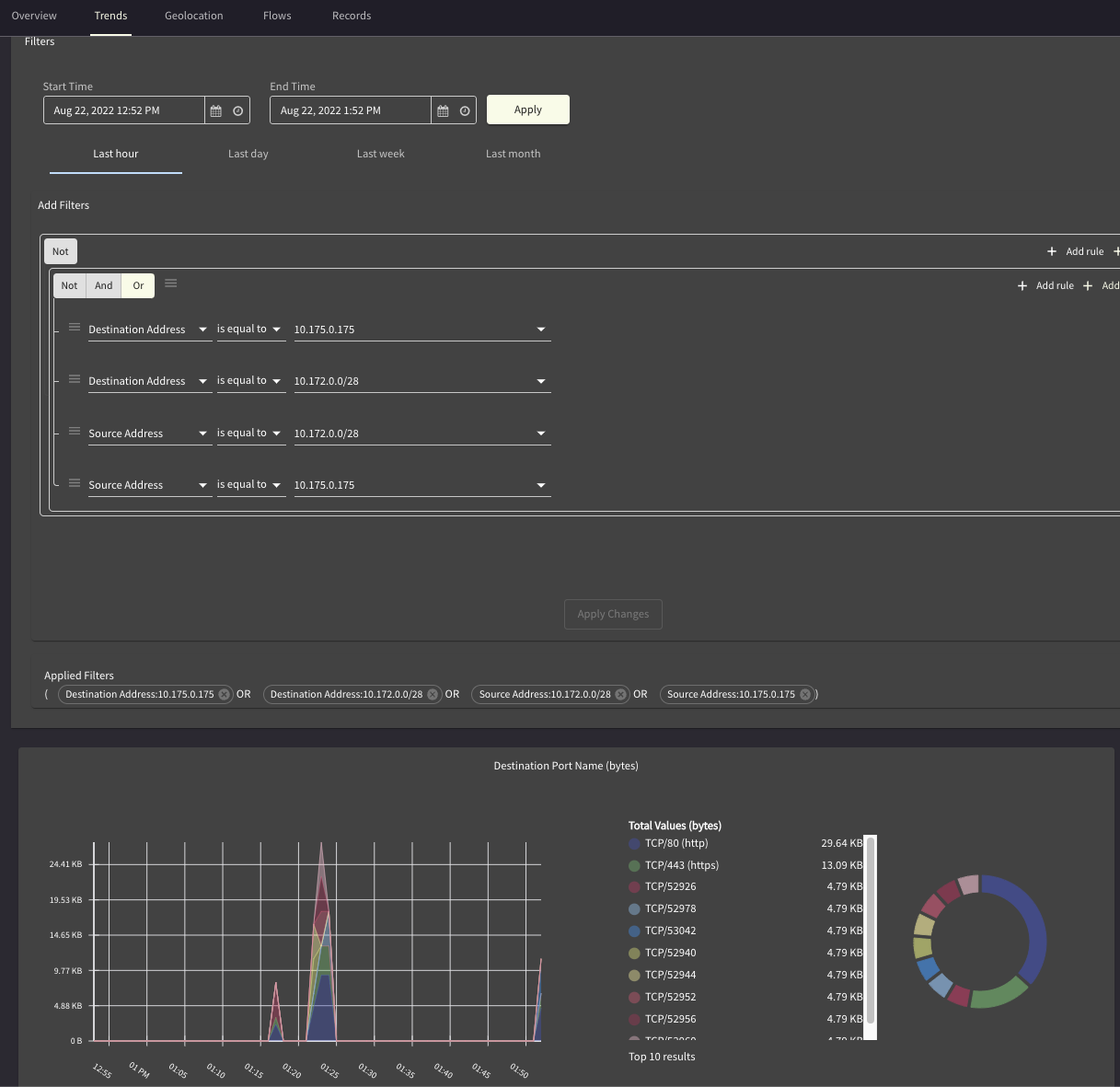

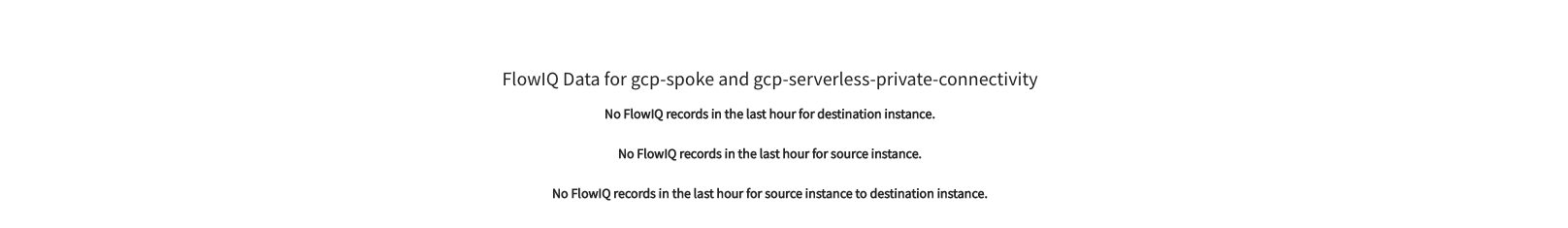

Flow Visibility

How much traffic was transferred?

When? How?

Between S2C and Ingress Endpoint of GCP Function

Between Function Egress (Connector) Subnet and Backend (10.6.1.21)

Where was it seen, where is it going out, number of packets, throughput

Heatmap for this communication? Trends?

Routing DB

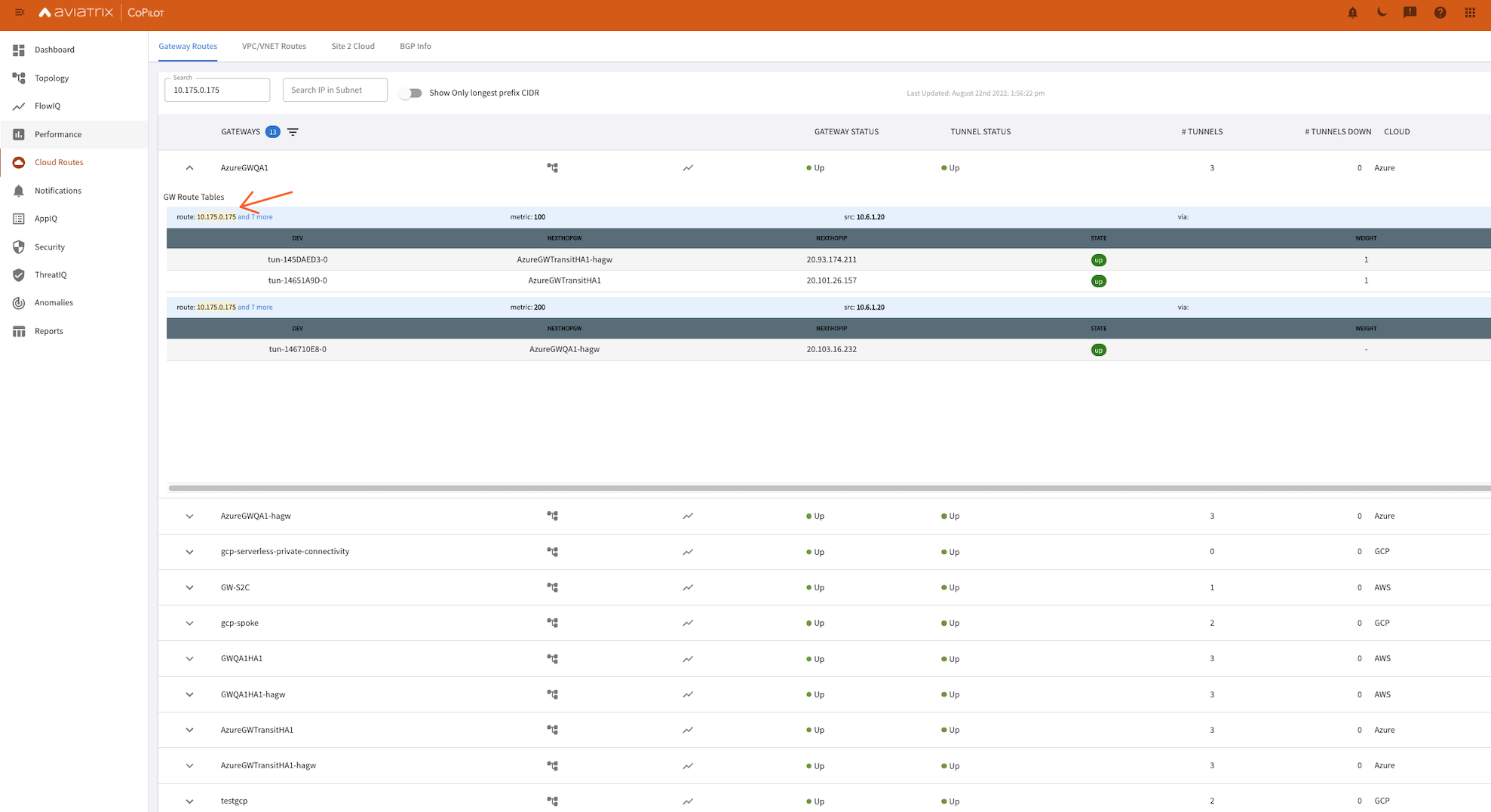

Here I was trying to see if the Ingress Endpoint for my GCP Function (10.175.0.175) is reachable from Gateways throughout my network:

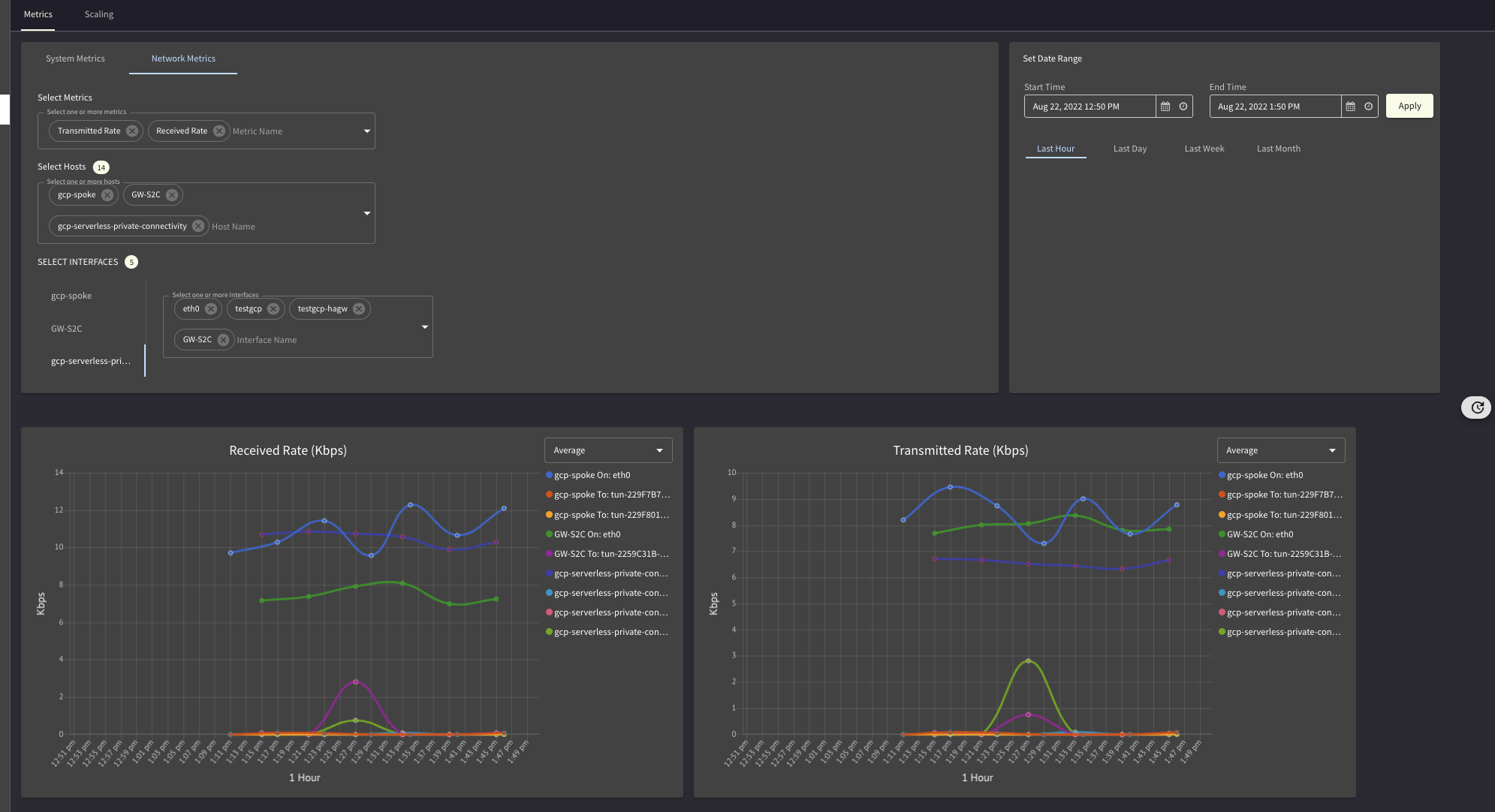
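The Routing DB check boils down to a longest-prefix match. A gateway forwards toward the most specific route covering the destination, so the advertised 10.175.0.175/32 should win over any broader summary. Here is a small illustration with Python's ipaddress module. The route table and next-hop names are hypothetical:

```python
import ipaddress
from typing import Dict, Optional, Tuple


def longest_prefix_match(routes: Dict[str, str], destination: str) -> Optional[Tuple[str, str]]:
    """Return (prefix, next_hop) of the most specific route that covers destination."""
    dst = ipaddress.ip_address(destination)
    best_net, best_hop = None, None
    for prefix, next_hop in routes.items():
        net = ipaddress.ip_network(prefix)
        # keep the covering route with the longest prefix length
        if dst in net and (best_net is None or net.prefixlen > best_net.prefixlen):
            best_net, best_hop = net, next_hop
    return (str(best_net), best_hop) if best_net is not None else None


if __name__ == "__main__":
    # Hypothetical routes as seen on one gateway
    routes = {"10.0.0.0/8": "transit-gw", "10.175.0.0/16": "gcp-transit", "10.175.0.175/32": "gcp-spoke-gw"}
    print(longest_prefix_match(routes, "10.175.0.175"))
```

With these routes, the lookup for 10.175.0.175 returns the /32 via gcp-spoke-gw. An address outside every prefix returns None, which would correspond to an unreachable endpoint.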

Traffic Graphs

Any sudden change in the traffic?

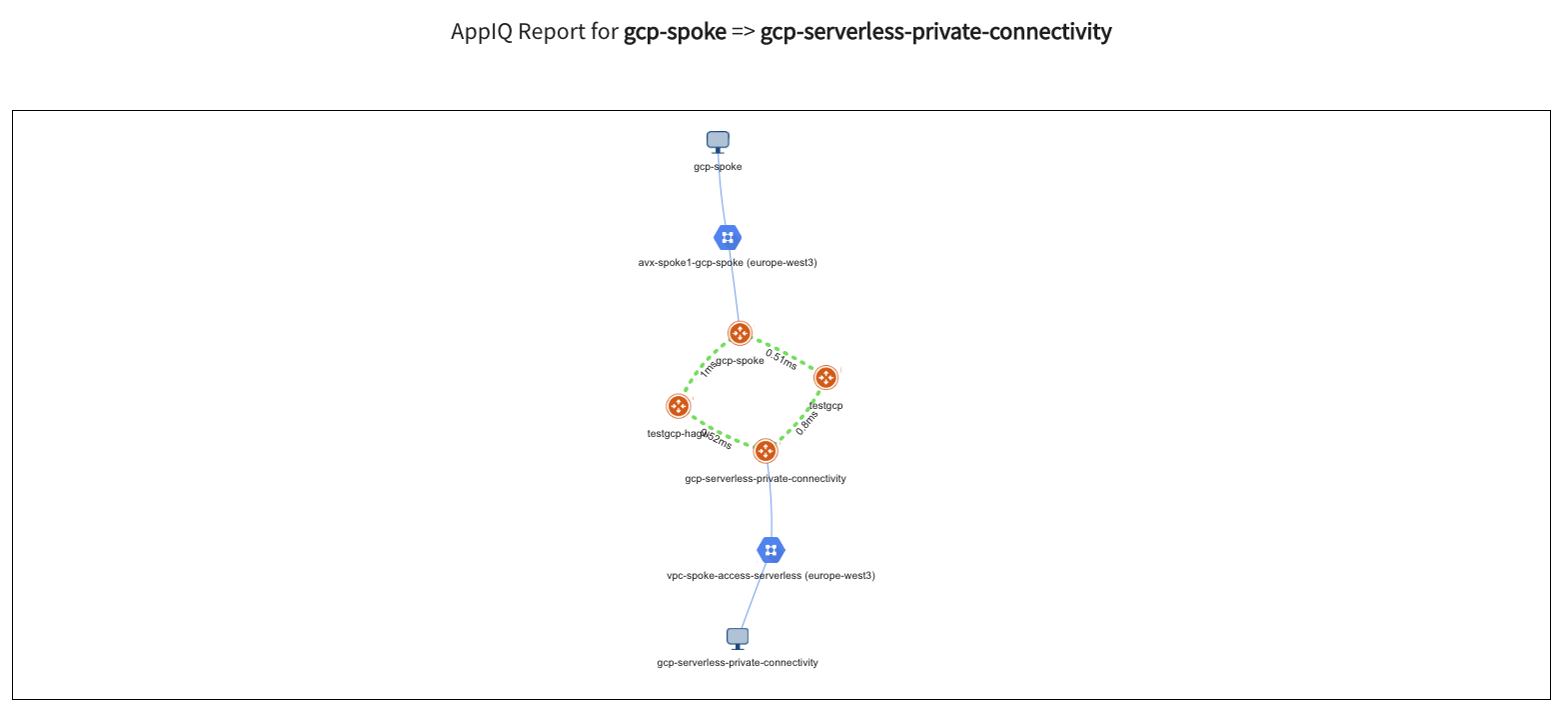

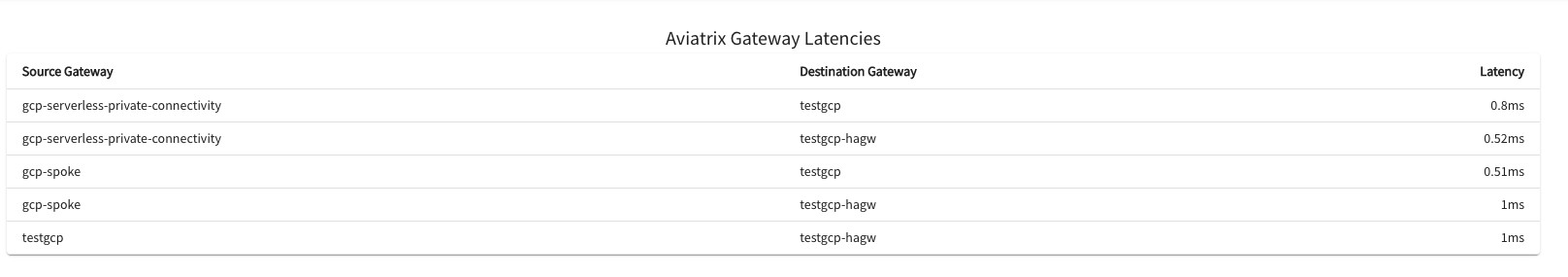

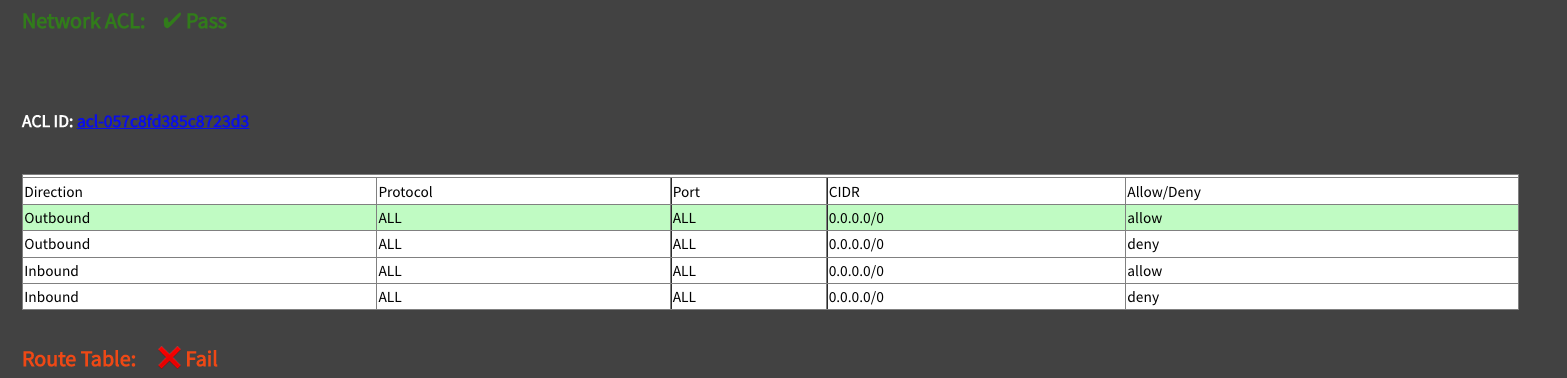

AppIQ

I want to simulate whether there are Control Plane or Data Plane issues in the path between 2 Gateways.

See if I forgot to configure something.

Maybe I misconfigured a security group somewhere.

Anomaly Detection

If anything changes in your traffic patterns, get alerted about it before the impact becomes visible and propagates.
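One simple way to picture alerting on deviations and thresholds is a rolling baseline. The idea is to flag any sample that moves more than k standard deviations away from the mean of the samples before it. This is a generic technique shown as a sketch, not how CoPilot's anomaly detection is implemented. The window size and k are arbitrary choices:

```python
from statistics import mean, stdev
from typing import List, Sequence


def flag_deviations(samples: Sequence[float], window: int = 5, k: float = 3.0) -> List[int]:
    """Return indices of samples deviating more than k standard deviations
    from the mean of the preceding `window` samples."""
    if window < 2:
        raise ValueError("window must be at least 2 to compute a standard deviation")
    flagged = []
    for i in range(window, len(samples)):
        baseline = samples[i - window:i]
        mu, sigma = mean(baseline), stdev(baseline)
        # on a perfectly flat baseline, any change at all counts as a deviation
        if (sigma == 0 and samples[i] != mu) or (sigma > 0 and abs(samples[i] - mu) > k * sigma):
            flagged.append(i)
    return flagged


if __name__ == "__main__":
    # Hypothetical Mbps samples between the Connector subnet and the backend
    print(flag_deviations([100, 102, 98, 101, 99, 100, 500, 101]))
```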

Extra - VPC Serverless / Egress + Tags

VPC Connectors come pre-tagged by GCP for Firewalling and PBR purposes.

Universal network tag (vpc-connector): Applies to all existing connectors and any connectors made in the future.

Unique network tag (vpc-connector-REGION-CONNECTOR_NAME): Applies to the connector CONNECTOR_NAME in the region REGION.
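The tag names follow a fixed pattern, so you can compute them and reuse them in firewall rules. The sketch below builds both tags and a `gcloud compute firewall-rules create` command as an argument list. The rule name, the network name and the tcp:80 port are hypothetical:

```python
from typing import List, Tuple


def connector_tags(region: str, connector_name: str) -> Tuple[str, str]:
    """Return (universal_tag, unique_tag) for a Serverless VPC Access connector."""
    return "vpc-connector", f"vpc-connector-{region}-{connector_name}"


def firewall_rule_cmd(rule_name: str, network: str, source_tag: str, allow: str) -> List[str]:
    """Build a gcloud argv for an INGRESS rule that matches traffic from the connector tag."""
    return [
        "gcloud", "compute", "firewall-rules", "create", rule_name,
        f"--network={network}",
        "--direction=INGRESS",
        f"--source-tags={source_tag}",
        f"--allow={allow}",
    ]


if __name__ == "__main__":
    _, unique = connector_tags("europe-west3", "serverless-connector")
    # Hypothetical rule and network names
    print(" ".join(firewall_rule_cmd("allow-fn-connector-http", "my-vpc", unique, "tcp:80")))
```

Passing the list to `subprocess.run(cmd, check=True)` would execute it. Review the rule before doing so.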